Monitoring and Management: Key Considerations for the Modern Data Center

Last month we covered a very interesting topic: best practices when creating a data center monitoring scheme. It seems like the conversation is far from over, however. Over the past month, I received some great feedback and had some wonderful discussions about actually monitoring and managing the modern data center. After all, your environment has come quite a long way. To that extent, there’s an important question to ask: How do data center owners “know” what factors to monitor? Are there any established guidelines that specify the data center environment?

We all know of ASHRAE and its guidelines. However, industry experts will also tell you that data center deployments can be unique to the requirements of the organization. This is even truer today, with data centers being used as hubs for big data processing, cloud workloads, virtualization, and much more. Coupled with new types of data center architectures like convergence, you’re seeing new levels of monitoring and management requirements.

Here’s the challenge: it’s always hard to give environmental monitoring best practices because each data center is sized differently and has different requirements. However, there are core environmental conditions which should be observed. Again, some of these may not apply, depending on the size and complexity of the infrastructure. To that extent, let’s revisit some of the topics we discussed last month and expand on them a bit.

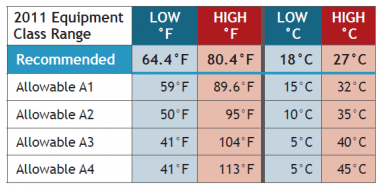

- Temperature. Gauging temperature will always be a key component within any data center environment. When working with temperature ranges, the optimum range for equipment stability is normally recommended to be between 21 and 23 degrees Celsius (70 to 74 degrees Fahrenheit). However, this can vary depending on the use-case of your data center. In fact, ranges can go from 18°C to 27°C (64.4°F to 80.6°F), again depending on your specific ecosystem. Consider the following report and graph:

- Humidity. Poor handling of humidity can have very negative results on any data center. This is why having a humidity sensor is fairly standard in environments of any size. Relative Humidity (RH) is the ratio of the moisture in a given sample of air at a given temperature to the maximum amount of moisture the sample could contain at that temperature. The recommended RH should be somewhere between 45% and 60%. This range creates an optimal environment for data center and server equipment to operate.

- Wetness. Dripping water, wet floors, and leaks are to be completely avoided in any data center. Wetness sensors can alert the right administrator so the issue is addressed quickly.

- Airflow. Maintaining good airflow is absolutely vital for both temperature and humidity control. Good airflow recommendations suggest 10 to 13 feet per second. It’s important to try and avoid turbulent airflow as this will be felt as a draught. This is where the size of the environment becomes very important. In a highly dense data center, the number of air changes per hour may be several times greater than that of a smaller environment.

- Rack conditions. Within a rack, it’s important to monitor all of the above components and possibly others, including rack entry, thermal mapping, and the recirculation airflow percentage.

- Computer Room Air Conditioner/Handler. The unit that cools and handles room conditions must be observed and monitored as well. This includes supply and return temperatures, internal humidity statistics, and air loss percentages.

- PDU and Electrical Status. The electrical flow of the environment should be monitored thoroughly to maintain optimal performance. This means monitoring the complete branch circuit and the power panel.
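
The ranges quoted in the list above can be turned into a simple threshold check. What follows is a minimal Python sketch, not a definitive implementation: the metric names, the `RANGES` table, and the `check_readings` function are all hypothetical, and the limits are simply the figures cited above (the wider 18-27°C temperature range, 45-60% RH, and 10-13 feet per second of airflow). Your own facility may well call for different values.

```python
from typing import Dict, List, Tuple

# Assumed limits, copied from the figures in the list above.
# The metric names are made up for this example; tune everything per site.
RANGES: Dict[str, Tuple[float, float]] = {
    "temperature_c": (18.0, 27.0),
    "humidity_rh_pct": (45.0, 60.0),
    "airflow_ft_per_s": (10.0, 13.0),
}


def check_readings(readings: dict) -> List[str]:
    """Return one alert message per reading that is missing or out of range."""
    alerts = []
    for metric, (low, high) in RANGES.items():
        value = readings.get(metric)
        if value is None:
            alerts.append(f"{metric}: no reading received")
        elif value < low:
            alerts.append(f"{metric}: {value} is below the minimum of {low}")
        elif value > high:
            alerts.append(f"{metric}: {value} is above the maximum of {high}")
    # Wetness is treated as a yes/no signal rather than a range.
    if readings.get("leak_detected"):
        alerts.append("wetness: leak detected")
    return alerts


if __name__ == "__main__":
    sample = {
        "temperature_c": 29.5,
        "humidity_rh_pct": 50.0,
        "airflow_ft_per_s": 11.0,
        "leak_detected": False,
    }
    for alert in check_readings(sample):
        print(alert)
```

A missing reading is reported as an alert rather than ignored, on the assumption that a silent sensor is itself worth investigating.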

Now, there’s a second part to the conversation: integrating environmental monitoring tools with overall data center management.

The other question I received was this: How do these all fit together to give companies a complete picture of what’s happening in the data center?

There is no question that large data center environments need clear visibility into their environment. This isn’t just environmental information; it means server metrics as well. There are tools which are able to watch over power consumption, CPU, RAM and other vital components in conjunction with environmental monitoring systems. The true success of any large infrastructure will be the communication between the data center teams. Alerts must go to the correct engineer and manager, and the server, data center and now virtualization teams all have to coordinate to create an optimally functioning environment. Data center consolidation has been an ongoing initiative at many organizations, which means that larger servers are performing more core functions. All teams with monitoring capabilities must collaborate with one another to create an environmental diagnostics plan should an event occur with any system.
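
To make the idea of alerts reaching the correct team a little more concrete, here is a small Python sketch of a routing table. It is only an illustration under stated assumptions: the alert categories, the team names, and the `route_alert` function are hypothetical, and a real deployment would hook into whatever notification system your monitoring tools provide.

```python
from typing import Dict, List, Tuple

# Hypothetical team names, loosely based on the teams mentioned above.
ALL_TEAMS: List[str] = ["data_center", "server", "virtualization"]

# Hypothetical mapping from alert category to the teams that should see it.
ROUTES: Dict[str, List[str]] = {
    "environment": ["data_center"],
    "power": ["data_center", "server"],
    "server": ["server"],
    "hypervisor": ["virtualization", "server"],
}


def route_alert(category: str, message: str) -> List[Tuple[str, str]]:
    """Pair the message with every team that should receive it.

    Unknown categories go to all teams so that nothing is silently dropped.
    """
    teams = ROUTES.get(category, ALL_TEAMS)
    return [(team, message) for team in teams]


if __name__ == "__main__":
    for team, msg in route_alert("power", "branch circuit load is high"):
        print(f"notify {team}: {msg}")
```

Sending unrecognized categories to every team is a deliberate choice in this sketch: it is noisier, but it avoids an event going unnoticed because nobody owned it.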

Here’s the best piece of advice – Integration of major systems should be done with a provider capable of handling the environment and organizational needs of the customer and the data center. You don’t have to do this alone.

The health of your data center will depend on the amount of good information flowing into your management framework. Make sure to take into consideration all of the environmental factors within your data center and how they impact the workloads relying on data center resources. This will increase data center health and resiliency, and increase its value to the business.

