3 Data Center Cooling Pieces of Advice You Need to Know

How ready are you for the data center of tomorrow? New concepts like the ‘Internet of Everything’ are making an impact on data center technologies, delivery methodologies, and most of all – how you control the environmental variables of your entire infrastructure.

Consider the following from Cisco’s latest Cloud Index Report:

- Globally, the data created by IoE devices will reach 403 ZB per year (33.6 ZB per month) by 2018, up from 113.4 ZB per year (9.4 ZB per month) in 2013.

- Globally, the data created by IoE devices will be 277 times higher than the amount of data being transmitted to data centers from end-user devices and 47 times higher than total data center traffic by 2018.

- By 2018, 53 percent (2 billion) of the consumer Internet population will use personal cloud storage, up from 38 percent (922 million users) in 2013.

- Globally, consumer cloud storage traffic per user will be 811 megabytes per month by 2018, compared to 186 megabytes per month in 2013.

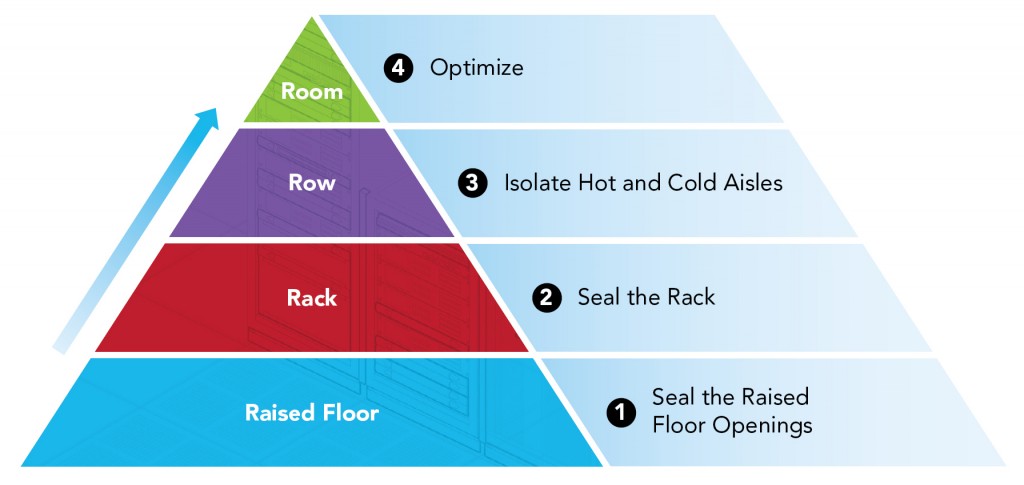

All of these trends will affect your data center cooling landscape, so it’s important to keep looking for innovative ways to keep your environment running optimally. In a recent Upsite blog, we looked at the 4 R’s Methodology (shown below), Upsite’s very own “Hierarchy of Airflow Management Needs.”

These methodologies introduced a new way of thinking around data center cooling and airflow concepts. Now – we take it a step further.

I had the chance to ask a few industry professionals, as well as data center directors, what they felt was the future of data center cooling and how they are preparing their data centers for the airflow challenges of tomorrow.

Lars Strong, Senior Engineer for Upsite Technologies:

- The most valuable advice I have for anyone undertaking an improvement effort is to first identify the utilization of the cooling infrastructure: quantify both the capacities and the loads the cooling infrastructure must deal with. This is the best way to identify the potential improvements that can be achieved. Calculating the cooling capacity factor (CCF), the ratio of total rated cooling capacity to estimated heat load, does just this.

- I often see customers (data center managers) get ahead of themselves by implementing advanced methods without first optimizing the fundamentals, the foundational layers. Examples include installing containment without properly managing perforated tile placement, or implementing detailed measuring and monitoring (DCIM) without first understanding basic metrics.
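
As a rough illustration of the CCF calculation described above, here is a minimal Python sketch. It follows the definition given in the text (total rated cooling capacity divided by estimated heat load). The function name, the unit ratings, and the heat load figure are all hypothetical, and how you estimate the heat load for your own room is left as an assumption.

```python
def cooling_capacity_factor(rated_capacities_kw, estimated_heat_load_kw):
    """Return CCF: total rated cooling capacity divided by estimated heat load."""
    if estimated_heat_load_kw <= 0:
        raise ValueError("estimated heat load must be positive")
    return sum(rated_capacities_kw) / estimated_heat_load_kw


# Hypothetical room: four running cooling units rated at 90 kW each
# and an estimated heat load of 200 kW. Both figures are invented.
running_units_kw = [90.0, 90.0, 90.0, 90.0]
heat_load_kw = 200.0

ccf = cooling_capacity_factor(running_units_kw, heat_load_kw)
print(f"CCF = {ccf:.2f}")  # 360 kW / 200 kW = 1.80
```

In this made-up case the rated capacity is 80 percent above the estimated load. A gap of that size may suggest that airflow management, rather than raw capacity, deserves attention first, but the right target depends on the site.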

Bill Kleyman, Director of Strategy and Innovation for MTM Technologies:

- I’ve worked within a number of data center environments and one of the biggest pieces of advice I can give is to always be proactive. Just because your data center doesn’t change in “size” doesn’t mean there isn’t more density to worry about. Too often I’ve seen organizations forget that virtualization and converged platforms are actually creating more cooling and environmental control requirements. Ultimately, this causes gaps in effective cooling and could cost an environment substantially.

- Let me give you another example – I was working with a city municipality a while ago. They had some advanced server architectures in their data center but their cooling was way off. Basically, they approved the deployment of new hardware and racks but said that cooling could come later. Their reason? As the data center admin explained to me – it was budgetary. He assured me that new cooling gear was inbound. Four months later my team was responding to a set of failed chassis units within their racks. They overheated and five blades were lost. The customer had yet to upgrade their cooling platform.
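
The point about density rising while the room stays the same size can be shown with simple arithmetic. The sketch below is only an illustration: the loads, rack counts, and per-rack cooling budget are hypothetical numbers, and `rack_density_kw` is a helper invented for this example.

```python
def rack_density_kw(total_it_load_kw, rack_count):
    """Average power density per rack, in kW."""
    if rack_count <= 0:
        raise ValueError("rack_count must be positive")
    return total_it_load_kw / rack_count


# Hypothetical consolidation: the same 40 kW of IT load moves from
# 10 racks onto 4 racks of converged hardware.
before = rack_density_kw(40.0, 10)
after = rack_density_kw(40.0, 4)

# Assumed per-rack cooling budget; a real value comes from your own design.
per_rack_cooling_budget_kw = 6.0

for label, density in (("before", before), ("after", after)):
    status = "within" if density <= per_rack_cooling_budget_kw else "exceeds"
    print(f"{label}: {density:.1f} kW/rack ({status} the "
          f"{per_rack_cooling_budget_kw:.1f} kW budget)")
```

The total room load is unchanged in this example, yet the per-rack load more than doubles, which is the kind of gap that goes unnoticed when only floor space is tracked.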

Senior Director of Data Center Operations, Large Midwest-based Healthcare Organization:

- We’ve seen the number of concurrent connections jump through the roof. Our doctors, nurses and other associates are all consuming resources way beyond a traditional desktop. Honestly, the best piece of advice I can give is never to forget about cooling your rack. Yes, keeping a complete eye on your entire data center is critical – but what we’ve seen is that your big cooling indicators start within your rack environment. Always maintain good airflow within your rack and don’t be afraid to deploy multiple sensors! This can sometimes mean putting sensors at the top, bottom, and at trouble spots.

- One other thing that we’ve noticed is the amount of heterogeneous IT gear we’ve been deploying. We really don’t have too many racks that look alike. The point is that keeping an eye on the cooling and environmental variables of your rack is critical. Two racks might be the same but the third is completely different. This means sensor placement, how you control airflow intake and even grommet placement must all be accounted for.
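
To make the multiple-sensor advice concrete, here is a small Python sketch that groups inlet temperature readings by rack and flags racks with hot positions or a wide top-to-bottom spread. Everything in it is an assumption for illustration: the `Reading` type, the sample values, and both thresholds. The 27 °C limit is borrowed from the upper end of the commonly cited ASHRAE recommended inlet range, so substitute whatever limit your own equipment calls for.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    rack: str
    position: str   # e.g. "top", "bottom", or a named trouble spot
    inlet_c: float  # inlet air temperature in degrees Celsius


INLET_LIMIT_C = 27.0  # assumed alert threshold per sensor
MAX_SPREAD_C = 5.0    # assumed acceptable spread within one rack


def summarize(readings):
    """Group readings by rack and flag hot positions or a large spread."""
    by_rack = {}
    for r in readings:
        by_rack.setdefault(r.rack, []).append(r)

    report = {}
    for rack, items in by_rack.items():
        temps = [r.inlet_c for r in items]
        spread = max(temps) - min(temps)
        hot = sorted(r.position for r in items if r.inlet_c > INLET_LIMIT_C)
        report[rack] = {
            "max_c": max(temps),
            "spread_c": spread,
            "hot_positions": hot,
            "alert": bool(hot) or spread > MAX_SPREAD_C,
        }
    return report


# Hypothetical readings from two racks with different sensor layouts.
readings = [
    Reading("A", "top", 24.5),
    Reading("A", "bottom", 20.0),
    Reading("B", "top", 29.0),
    Reading("B", "bottom", 21.0),
    Reading("B", "behind blade chassis", 28.0),
]

report = summarize(readings)
for rack, info in report.items():
    print(rack, info)
```

Because the racks are keyed by name and positions are free-form strings, each rack can carry a different number of sensors, which suits the heterogeneous layouts described above.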

With all of this in mind – ask yourself a pretty simple question – how well are you controlling the environmental variables of your data center? Do you have rack-level visibility? Are you capable of dynamically adjusting to cooling changes? In working with industry data center professionals – one of the biggest takeaways really revolves around proactively stopping issues before they become major problems. A lot of times – this means being able to forecast usage changes and truly understanding the operations of your data center.

1 Comment

This is a good article and needs to be expanded upon. Cooling is vital to the data center and getting the cold air where it needs to go is critical. It is relatively easy to define the cooling requirements of the space and with the newer rack PDUs it makes it easy to get down to the rack level cooling requirements. The rack level is important to keep up to date on the power and cooling needs as this will dictate the need for air flow to the rack. Many of the data centers I have been in struggle with air flow requirements and without properly laying out the data center the whole room struggles with getting the cold air where it is needed. Also, oversizing the cooling can create just as many problems as undersizing the equipment. Always monitor your real time power needs to the rack, which will help you to properly grow the data center with minimal issues. There are many simple, low cost steps that can be taken to ensure your data center can grow as your needs change.