Effects of Data Center Hot Air Re-Circulation

What is there to say about data center hot air re-circulation? It’s bad. It causes hot spots. It raises the cost of operating a data center. That about covers it, so this should be my shortest blog ever.

While the bottom-line effects are pretty straightforward, there are some subtle aspects to these effects, and there are implications for the overall well-being of the data center that are not always clearly understood. We have committed significant virtual and print space, conference podium time, and curriculum critical mass to the sources of re-circulation, cures for re-circulation, and benefits derived from those cures; however, the effects of re-circulation have for some time been relegated to inference status in discussions focusing on causes and treatments. How about we take some time to remind ourselves why we care about such things?

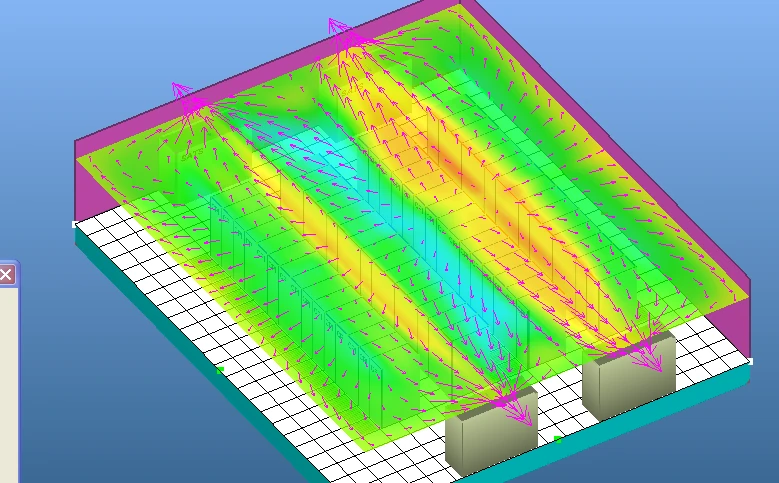

FIGURE 1: Hot Air Re-circulation over Row into Cold Aisle

Re-circulation is the condition in which supply air removes heat from IT equipment and is then re-ingested by IT equipment, sometimes multiple times, picking up more heat and rising in temperature with each pass. It can follow any number of different paths. A typical path is over the top of cabinets, migrating from the hot aisle to the cold aisle, as in Figure 1. This path of re-circulation is frequently caused by improperly located perimeter cooling units, either at the ends of cold aisles or perpendicular to the layout of rows and aisles. However, as with many of the paths for re-circulation, this path can also be created by an inadequate volume of supply air being delivered into particular cold aisles, in which case the servers will draw in whatever air is available, as illustrated in Figure 2.

FIGURE 2: Top of Row Re-circulation Detail

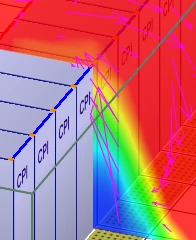

Re-circulation can also occur around the ends of rows, as shown in Figure 3, again generally because of improperly located perimeter cooling units or an inadequate supply of air to the cold aisle, or at least to the end of the cold aisle.

FIGURE 3: End of Row Re-circulation

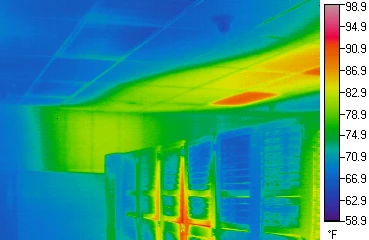

Even when some rudimentary effort at containment is attempted, there can still be less apparent paths for hot air re-circulation. Figure 4 is an infrared camera image of a data center cold aisle showing hot air leakage through the cabinets, because of poorly performing blanking panels and unsealed areas around the side perimeter of the server equipment, and through leaks in the ceiling contained return air path.

FIGURE 4: Infrared Thermal Image of Leakage Re-circulation

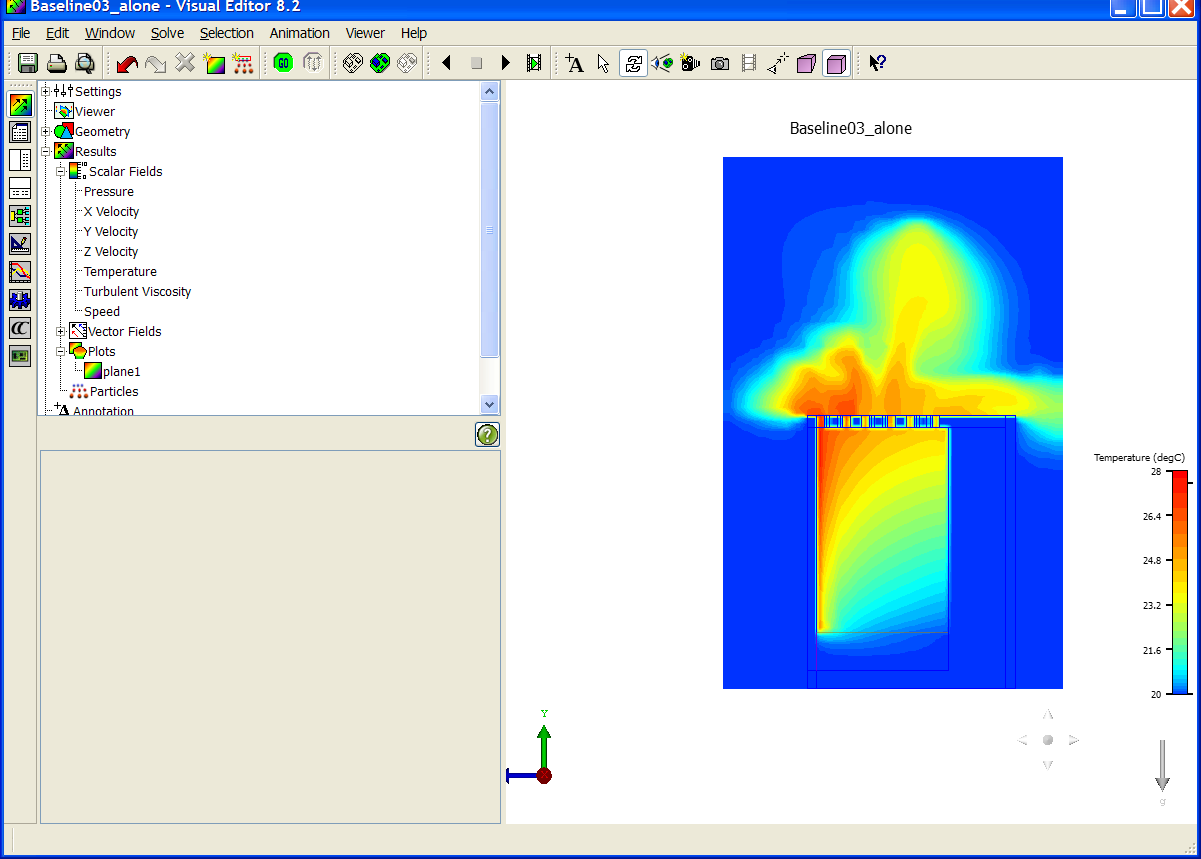

There are more specific paths of hot air re-circulation, as well. Storage arrays in cabinets with top mounted fan trays hearken back to the hot aisle-cold aisle nemesis of server cabinet fan kits from 15-20 years ago. The computational fluid dynamics model in Figure 5 shows how these configurations make no distinction between a hot aisle and a cold aisle and can be particularly problematic to a containment initiative.

FIGURE 5: CFD Model of Top of Rack Fan Tray

Finally, we do not see too many of the historically randomly organized data centers such as the chaotic little room in Figure 6, but obviously, these arrangements with some hot aisle/cold aisle organization and some military formation organization and some total lack of organization will create paths for multiple cycles of re-circulation.

FIGURE 6: Blast from the Past

From all the previous images, it is clear that one of the effects of data center hot air re-circulation is hot spots, as if that even needed to be stated. However, Mr. Data Center Professional, hot spots in most situations may very well be the least of your concerns. After all, as I am sure you recall, my blogs titled “Airflow Management Considerations for a New Data Center – Part 2: Server Performance versus Inlet Temperature,” from May 17, 2017, and “Airflow Management Considerations for a New Data Center – Part 6: Server Reliability versus Inlet Temperature,” from July 12, 2017, made it abundantly clear that not only are there no good reasons to avoid 95˚F inlet temperatures for most servers, there can actually be good reasons to allow temperatures to occasionally drift into the upper ranges of the manufacturers’ environmental operating specifications. If we consider most situations of re-circulation, we will see some mixing of re-circulated return air and supply air. Therefore, we might expect to see 70˚F supply being contaminated by 90˚F re-circulated return, resulting in a mixed supply around 80˚F. We could see another whole cycle of re-circulation along those terms without exceeding the manufacturers’ specifications for most equipment. Of course, in train wrecks like the blast from the past in Figure 6, where return air, already elevated as a result of some re-circulation on the supply side, is being directly ingested by server intake fans, it is entirely possible to experience at least some isolated instances of re-circulation exceeding allowable thresholds. Nevertheless, in general, hot spots are an obvious and typical effect of data center return re-circulation, but they may not be the disaster they were 10-15 years ago.
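The mixing arithmetic above can be sketched in a few lines. This is only an illustration: it assumes the two air streams blend in simple proportion by volume, that the 70˚F/90˚F example corresponds to an even 50/50 mix, and that servers add a hypothetical 20˚F across the equipment on each pass. None of those values are measurements, and the function name is made up for this sketch.

```python
def mixed_inlet_temp(supply_f, recirculated_f, recirc_fraction):
    """Inlet temperature (deg F) when a fraction of intake air is re-circulated return.

    Assumes simple proportional mixing of the two air streams.
    """
    if not 0.0 <= recirc_fraction <= 1.0:
        raise ValueError("recirc_fraction must be between 0 and 1")
    return (1.0 - recirc_fraction) * supply_f + recirc_fraction * recirculated_f


# First cycle: 70 F supply contaminated by 90 F return, assumed 50/50 mix.
first_pass = mixed_inlet_temp(70.0, 90.0, 0.5)
print(first_pass)  # 80.0

# Second cycle: assumed 20 F rise across the servers, same assumed mix.
ASSUMED_SERVER_DELTA_T_F = 20.0  # hypothetical value for illustration
second_pass = mixed_inlet_temp(70.0, first_pass + ASSUMED_SERVER_DELTA_T_F, 0.5)
print(second_pass)  # 85.0
```

Under these assumptions, a second cycle still lands below the 95˚F figure mentioned above, which is the point of the paragraph.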

With today’s computing equipment and the associated energy budgets for data centers, higher cooling costs may be a more critical effect of re-circulation than hot spots. If return re-circulation is resulting in some servers seeing intake air temperatures 25˚F higher than what is being supplied off the cooling coils, then obviously that supply temperature is going to need to be significantly lower than if the highest intake temperature were only 3˚F higher than the supply coming off the cooling coils. For anybody who is counting, that would be a 22˚F decrease in supply temperature compared to a data center with almost no re-circulation. That lower supply temperature results in an unnecessary chiller plant operating expenditure for 980,000 kWh of electricity for 1 MW of IT load, or 4.9 million kWh of unnecessary electricity for cooling 5 MW, or 9.8 million kWh for cooling 10 MW. At $0.10 per kWh, that unnecessary cooling cost for a 1 MW data center could have bought over sixty new servers, perhaps to replace any that may have croaked in the heat or, conversely, to keep up with the business expansion of such a well-managed enterprise. While over-heated servers may be more embarrassing and grab more unwanted attention than a high electricity bill, the expense of re-cooling re-circulated return air will usually have a more profound negative bottom-line impact than overheated servers.
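For anyone who wants to plug in their own numbers, here is a minimal sketch of that cost arithmetic. It takes the 980,000 kWh per MW of IT load from the paragraph above as given and scales it linearly with load, as the 5 MW and 10 MW figures do. The function and constant names are hypothetical.

```python
# Unnecessary chiller energy per MW of IT load for the 22 F supply
# temperature penalty, as quoted in the article.
EXTRA_KWH_PER_MW = 980_000


def wasted_cooling_cost(it_load_mw, price_per_kwh=0.10):
    """Return (extra kWh, extra dollars), assuming linear scaling with IT load."""
    extra_kwh = EXTRA_KWH_PER_MW * it_load_mw
    return extra_kwh, extra_kwh * price_per_kwh


for load_mw in (1, 5, 10):
    kwh, dollars = wasted_cooling_cost(load_mw)
    print(f"{load_mw} MW: {kwh:,.0f} kWh, ${dollars:,.0f}")
```

At the $0.10 rate used above, the 1 MW case works out to roughly $98,000.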

Another aspect of higher cooling costs generated from compensating for re-circulated return air is reduced access to free cooling and the resultant higher mechanical plant costs. Table 1 provides some examples of costs to operate the standard mechanical plant instead of two different types of economizers for five significant data center hubs at the lower temperature needed to compensate for return air re-circulation, using the same parameters I used in the chiller plant discussion in the preceding paragraph. For another way to put these numbers into perspective, besides the servers that could have been purchased, consider that the cost for cooling this example data center in San Jose to the ASHRAE recommended guidelines (80.6˚F maximum) when airside economization could have been available would equate to losing a $7 million customer. Data center return air re-circulation is costly.

TABLE 1: Cost of Lost Free Cooling Due to Re-Circulation for 5 MW IT Load

| Location | ASHRAE Recommendation: Indirect Evap | ASHRAE Recommendation: Air | Manufacturers’ Specs: Indirect Evap | Manufacturers’ Specs: Air |
| --- | --- | --- | --- | --- |
| Chicago | $512,400 | $566,020 | $352,240 | $282,380 |
| Dallas | $567,280 | $611,100 | $699,580 | $545,720 |
| Denver | $447,580 | $404,440 | $111,440 | $209,020 |
| Reston | $589,400 | $486,640 | $417,620 | $348,180 |
| San Jose | $504,420 | $684,320 | $135,100 | $219,800 |

Accommodation strategies for re-circulation in the data center are not limited to compensating with lower temperature set points. Bypass airflow is another frequent effect of re-circulation, as supply air volumes can be ramped up to ward off incursions by re-circulation. For example, with end-of-row re-circulation as illustrated above in Figure 3, bypass can keep that warm air out of the cold aisle in a couple of different ways. One approach could be to just over-produce supply air volume, so those floor tiles near the end of the aisle are producing an adequate amount to divert the return air away from the cold aisle. In this approach, the volume of supply air toward the middle of the cold aisle may far exceed demand and return to the cooling units without picking up and removing any heat. Another approach to using bypass to combat end-of-row re-circulation would be to add some extra perforated floor tiles or grates beside the last cabinet in the row or even at the end of the hot aisle to effectively act as a cold air blockade to return air incursion. In either case, re-circulation has been avoided, but fan energy has been increased. The math of fan affinity laws (cube effect) works against us just as it works for us, so if we needed to produce an extra 20% of bypass airflow to combat some instances of re-circulation, we have increased our cooling fan energy demand by about 73% (1.2³ ≈ 1.73). Besides the non-linear energy cost increases, this need for bypass airflow could necessitate acquisition costs for additional cooling equipment that should not otherwise be needed. The same dynamics and math would apply to bypass airflow combatting re-circulation over the tops of rows of cabinets.
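The cube effect is easy to check for any amount of extra airflow. The sketch below applies the idealized fan affinity relationship, in which fan power scales with the cube of airflow; real fans and drives will deviate somewhat, and the function name is just a label for this example.

```python
def fan_power_ratio(airflow_ratio):
    """Idealized fan affinity law: power scales with the cube of airflow."""
    return airflow_ratio ** 3


# 20% extra airflow produced as bypass to hold back re-circulation.
increase = fan_power_ratio(1.20) - 1.0
print(f"{increase:.0%}")  # 73%
```

The same function run in the other direction shows why trimming unneeded airflow pays off so quickly: `fan_power_ratio(0.80)` is about 0.51, or roughly half the fan power for 80% of the airflow.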

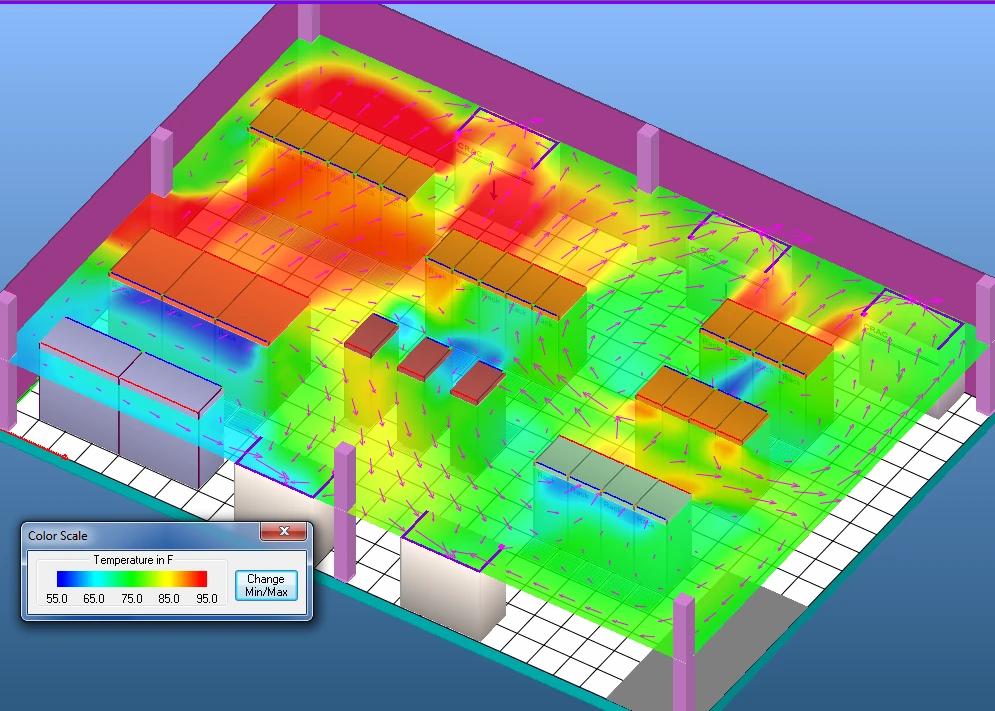

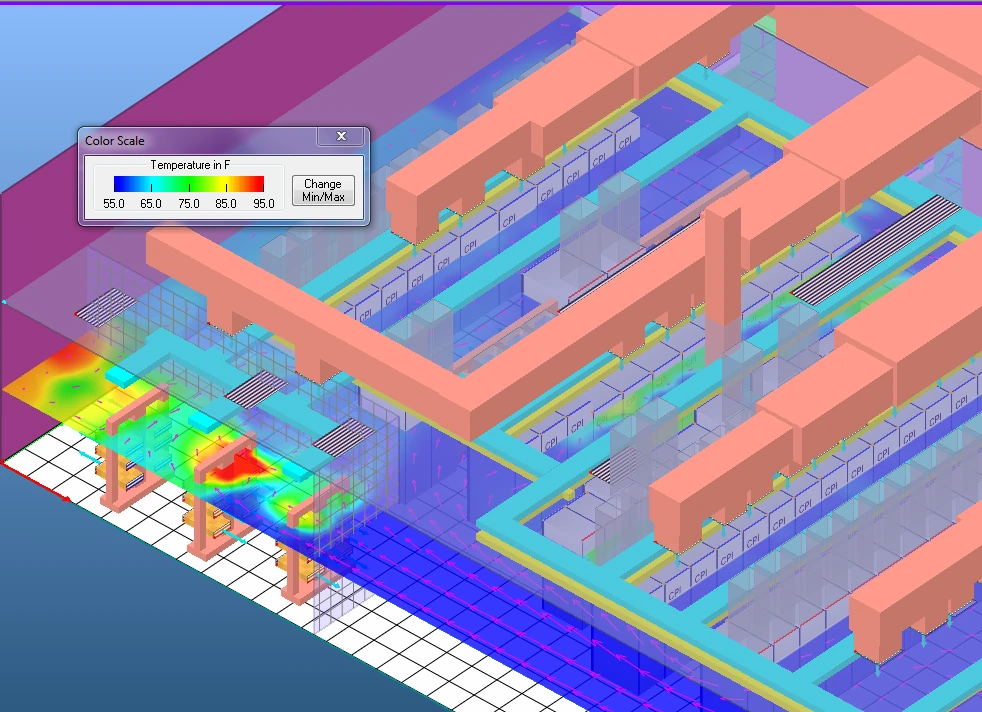

Another element of data center return air re-circulation is that this can be one of those tales about the kingdom being lost for want of a nail, as one small spot with re-circulation can undermine the whole data center. Figure 7 provides a perfect example of how a little chink in the armor can defeat a much larger data center space. In this case, there is re-circulation from side-breathing switches in an entrance cage that forces a reduction in chiller plant temperature affecting the entire data center, and the resultant price tag is for cooling the whole space, not just that small corner.

FIGURE 7: Effect of Small Pocket of Re-circulation

Finally, re-circulation can give us a false sense of security about how well we are doing in managing our space. A very common benchmark for doing a quick check of data center efficiency is the ΔT at our cooling units. The rule of thumb is that the greater the difference between the supply air temperature and the return air temperature, the greater our cooling unit efficiency. In theory, that may be true, as that means we are removing more heat per cooling unit. However, if our IT equipment has an average ΔT of 20˚F and our cooling equipment is seeing a 30˚F ΔT, then we are removing the kind of heat we get by leaving the door open during our wonderful Texas summers down here.

Conversely, re-circulation could mask a severe bypass problem. Instead of intentionally creating a certain amount of bypass to compensate for re-circulation, I have seen instances where bypass and re-circulation existed side-by-side and went undetected because the IT equipment was not complaining and the ΔT back at the cooling equipment seemed to approximate what was expected to be seen across the IT equipment. Of course, PUE will rat out these excesses in the blink of a calculate button. Nevertheless, without an umbrella efficiency metric like PUE, re-circulation can efficiently mask bypass inefficiencies.
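As a rough illustration of the ΔT comparison discussed above, the sketch below flags which problem a mismatch hints at. The 2˚F tolerance and the function name are arbitrary assumptions for the example, not an industry threshold, and a match between the two ΔTs is deliberately reported as inconclusive because, as noted, bypass and re-circulation can offset each other.

```python
def delta_t_hint(it_delta_t_f, cooling_delta_t_f, tolerance_f=2.0):
    """Compare cooling-unit delta-T with IT equipment delta-T (both in deg F).

    tolerance_f is a hypothetical dead band chosen for illustration.
    """
    gap = cooling_delta_t_f - it_delta_t_f
    if gap > tolerance_f:
        # Return air is hotter than one pass through IT gear would explain.
        return "re-circulation likely"
    if gap < -tolerance_f:
        # Return air is being diluted by supply air that did no work.
        return "bypass likely"
    # Matching delta-Ts do not rule out both problems existing together.
    return "inconclusive"


print(delta_t_hint(20.0, 30.0))  # re-circulation likely
print(delta_t_hint(20.0, 12.0))  # bypass likely
print(delta_t_hint(20.0, 21.0))  # inconclusive
```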

Ian Seaton

Data Center Consultant
