Airflow Management Through the Years – Part 3: Environmental Monitoring

This is the third installment of our three-part series, Airflow Management Through the Years. To read the previous installment click here.

Before airflow management migrated from an effectiveness booster (eliminating hot spots and supporting higher densities) to an energy efficiency booster, environmental monitoring in the data center typically meant non-networked temperature and humidity sensors, checked to identify hot spots and respond with reduced set points. With the shift in focus to energy efficiency, environmental monitoring began a path toward greater sophistication and closer integration of IT with the mechanical plant. Static pressure sensors were used to maintain a minimum acceptable static pressure difference between supply and return air masses, as opposed to maintaining maximum underfloor pressure to assure adequate flow through all perforated floor tiles. Pressure sensors became more routinely integrated with building management systems controlling cooling unit fan speeds. Temperature sensors began to be used to monitor rack cooling indexes and to control inlet temperatures to smaller standard deviations, so that they no longer consumed the full range of the ASHRAE recommended server inlet temperature or the site service level agreement (SLA).
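The pressure-based fan control described above can be illustrated with a minimal sketch. It assumes a simple proportional controller; the function name, target pressure difference, gain, and speed limits are all hypothetical values chosen for illustration, not figures from any particular building management system.

```python
def next_fan_speed(current_speed_pct, measured_dp_pa, target_dp_pa=5.0,
                   gain_pct_per_pa=4.0, min_speed_pct=30.0, max_speed_pct=100.0):
    """One proportional control step for a cooling unit fan.

    Raises fan speed when the supply-to-return static pressure difference
    is below the target and lowers it when the difference is above the
    target. All default values are hypothetical.
    """
    # Positive error means the pressure difference is too low.
    error_pa = target_dp_pa - measured_dp_pa
    proposed = current_speed_pct + gain_pct_per_pa * error_pa
    # Keep the result within the fan's allowed operating range.
    return max(min_speed_pct, min(max_speed_pct, proposed))


# Hypothetical readings: pressure difference too low, then too high.
print(next_fan_speed(60.0, 3.0))
print(next_fan_speed(60.0, 7.0))
```

The point of the sketch is the control target: the loop holds a minimum pressure difference between supply and return rather than driving underfloor pressure as high as possible, so fan speed falls whenever the difference exceeds what is needed.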

As control and monitoring technologies became more sophisticated and widely deployed, and as the relationship between good airflow management and operating efficiency became better understood, standards and best practice guidelines began to exploit the improved capabilities and growing awareness. For example, the 2008 ASHRAE TC9.9 environmental guidelines expanded the recommended range for server inlet temperatures to 64.4-80.6°F. The 2011 guidelines then established four categories of servers with allowable temperature ranges from 59-90°F up to 41-113°F, and further defined dew point levels for humidity and allowable rates of temperature change.
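To show how inlet temperature readings might be scored against these ranges, here is a minimal sketch of a rack cooling index calculation. The article does not give a formula, so the sketch assumes the commonly cited definition with separate high and low indexes, uses the 64.4-80.6°F recommended range and the narrowest allowable range (59-90°F) quoted above, and runs on made-up sensor readings.

```python
from statistics import pstdev

# Ranges in °F taken from the figures quoted in the text.
REC_MIN_F, REC_MAX_F = 64.4, 80.6      # recommended range
ALLOW_MIN_F, ALLOW_MAX_F = 59.0, 90.0  # narrowest allowable range


def rack_cooling_index(inlet_temps_f):
    """Return (rci_hi, rci_lo) as percentages for a list of inlet temperatures.

    Assumed formula: 100% means no reading is outside the recommended
    range; the index drops as readings move toward the allowable limits.
    """
    n = len(inlet_temps_f)
    if n == 0:
        raise ValueError("need at least one inlet temperature")
    # Total degrees by which readings exceed the recommended maximum.
    over = sum(max(0.0, t - REC_MAX_F) for t in inlet_temps_f)
    # Total degrees by which readings fall below the recommended minimum.
    under = sum(max(0.0, REC_MIN_F - t) for t in inlet_temps_f)
    rci_hi = (1 - over / ((ALLOW_MAX_F - REC_MAX_F) * n)) * 100
    rci_lo = (1 - under / ((REC_MIN_F - ALLOW_MIN_F) * n)) * 100
    return rci_hi, rci_lo


readings = [68.0, 72.5, 75.0, 79.0, 83.0]  # hypothetical sensor readings, °F
rci_hi, rci_lo = rack_cooling_index(readings)
spread = pstdev(readings)  # population standard deviation of the readings
print(f"RCI_HI={rci_hi:.1f}%  RCI_LO={rci_lo:.1f}%  std dev={spread:.2f}°F")
```

In this made-up data set one reading sits above the recommended maximum, so the high-side index falls below 100% while the low-side index stays at 100%. Tightening the standard deviation of the readings is what allows the whole distribution to sit inside the recommended range.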

The major presumptions behind these new standards were that data center operators cared about improving their energy efficiency and had access to tools that would let them manage airflow in their spaces well enough to take full advantage of the new standards. In 2010, ASHRAE 90.1, Energy Standard for Buildings Except Low-Rise Residential Buildings, eliminated the process exemption for data centers and added prescriptions for economization, variable flow on fans, and restrictions on humidity management, reflecting evolving best practices for data center airflow management. Since ASHRAE 90.1 is the energy calculation foundation for LEED energy savings credits, this change eliminated the troubling irony associated with LEED-certified data centers: as of 2013, IT load and the associated mechanical plant and electrical distribution were included in data center energy calculations for LEED credits. Furthermore, since ASHRAE 90.1 is the basis for municipal and state building and energy codes, these airflow management best practices were becoming codified at local and state levels. California Title 24, for example, added a requirement in 2014 for a physical barrier between supply air and return air spaces in the data center.

As data center airflow management reached mainstream status in the past few years, the evolution of this field has focused on fine-tuning the developments of the preceding decade. Tate Access Floor published results of studies showing that servers themselves become a path for bypass air when pressure differentials between the spaces inside and outside contained aisles are inadequately managed. Chatsworth Products published results of experiments showing actual air handler energy use at different levels of containment leakage. These differences have driven development of a wide range of air sealing accessories for use inside server cabinets, between server cabinets, and between server cabinets and other containment barriers.

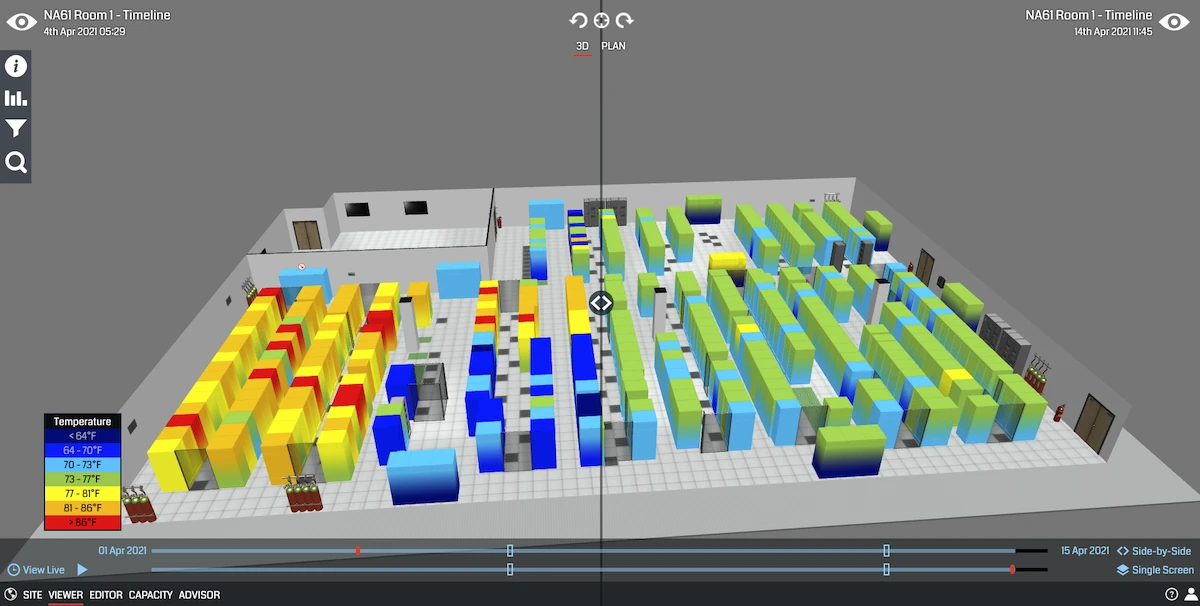

These differences have further contributed to the development of management tools such as online DCIM and the integration of CFD with environmental monitoring and aspects of DCIM. More recently, advancements in artificial intelligence and machine learning have contributed to an immersive environmental monitoring experience with insights aimed at optimizing the cooling infrastructure. Using emerging technologies like these will be the next step in airflow management: they help visualize airflow improvements, analyze the data collected from sensors, and advise on cooling optimization decisions, ultimately improving cooling efficiency.

Conclusion

Today, large data center operators and professional data center designers are tuned in to the value of airflow management and its role in improving operating efficiency. This knowledge is now widespread in the industry, including among those operating smaller data centers and computer rooms, regardless of how fully best practices have actually been implemented. So the question that remains is, where do we go from here? Perhaps the advent of direct contact liquid cooling technologies will eliminate airflow management as an issue altogether. Or, if we should see the deployment of cost-effective servers that can operate at 104°F or 113°F, as defined by ASHRAE server types A3 and A4, we might no longer be concerned with any airflow management practice other than making sure excess heat is evacuated from the space. For the time being, we have seen approximately 20 years of evolution of the concept of hot aisle/cold aisle separation, resulting in data centers operating at much higher densities and higher mechanical efficiency levels.

Ian Seaton

Data Center Consultant
